If you have ever opened a Talend job, you have seen the tMap. It is the Swiss army knife of Talend Studio—a single visual component that handles joins, filters, column transformations, expression logic, and multi-output routing all within one canvas. It is also, by a wide margin, the most complex component to convert when migrating Talend jobs to modern platforms. This article dissects the tMap’s internal structure, explains why automated conversion is both essential and difficult, and shows how MigryX transforms tMap logic into clean PySpark and SQL.

Why the tMap Is the Heart of Every Talend Job

Talend offers dozens of transformation components, but in practice, the tMap dominates. Surveys of enterprise Talend repositories consistently show that 60–80% of all transformation logic lives inside tMap components. This is because the tMap consolidates what would otherwise require five or six separate components:

- Joins: A tMap can join a main input flow with one or more lookup tables using inner or left outer join semantics.

- Column mapping: Output columns are defined by dragging input columns to output tables, optionally with transformation expressions applied inline.

- Filters: Each output table can have a filter expression that determines which rows are routed to that output.

- Expression variables: Intermediate calculated values that can be reused across multiple output columns, similar to CTEs in SQL.

- Reject flows: Rows that fail a lookup join can be routed to a separate “reject” output for error handling or logging.

- Multiple outputs: A single tMap can produce two, three, or more output flows, each with different column sets and filter conditions.

This consolidation makes Talend jobs visually compact, but it concentrates enormous complexity into a single XML node.

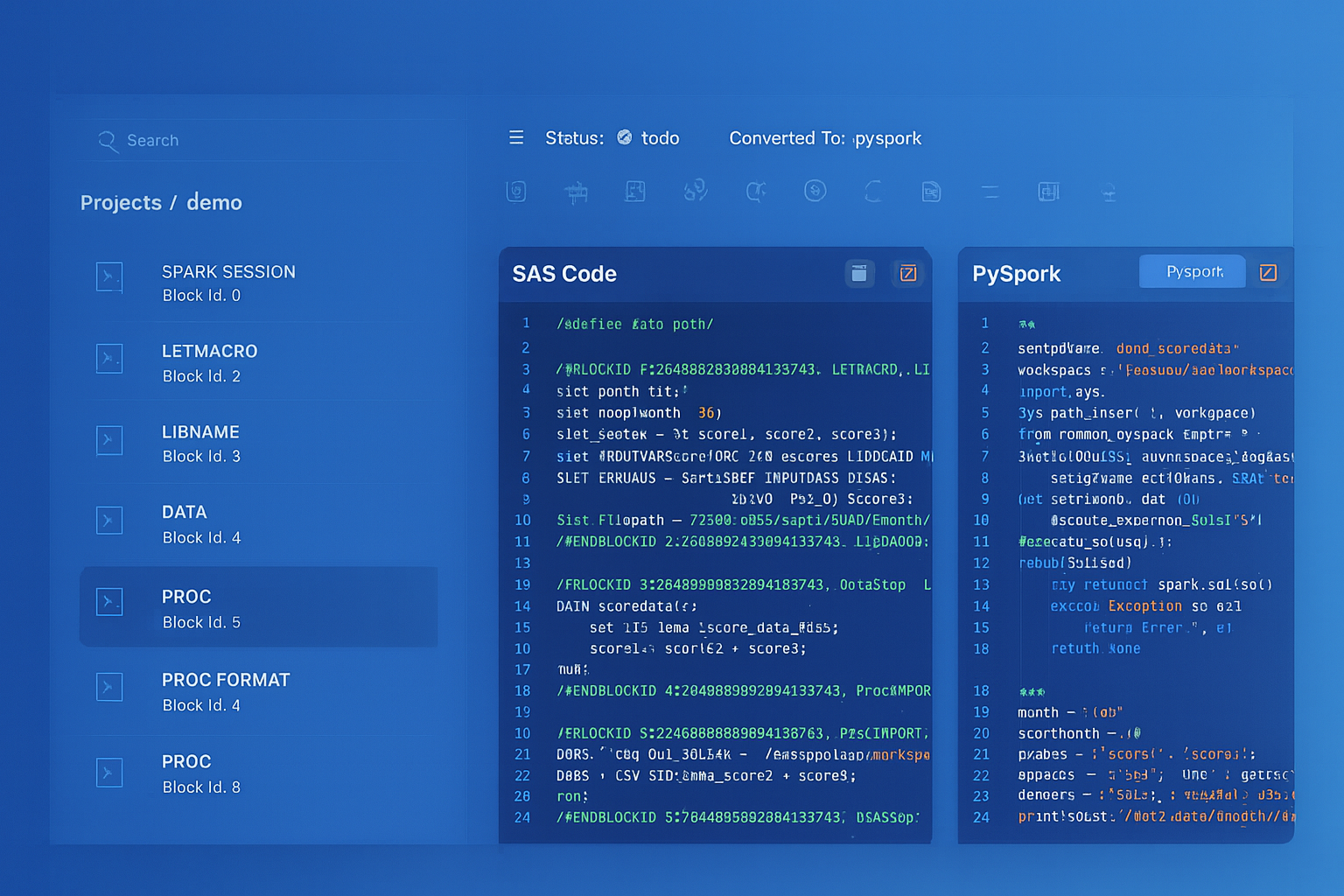

Talend to Apache PySpark migration — automated end-to-end by MigryX

Anatomy of the tMap XML

Under the hood, every tMap is serialized as a deeply nested XML structure within the job’s .item file. Join conditions, expression variables, filter rules, and output routing are all encoded in a proprietary schema that bears no resemblance to the visual designer. Parsing this XML requires understanding dozens of element types and their interactions.

The XML encodes every aspect of the tMap’s behavior: which input is the main flow versus a lookup, the join model for each lookup (unique match, first match, or all matches), how unmatched rows are handled, the filter conditions on each output, the expressions that compute each output column, and the intermediate variables that feed into those expressions. All of this is spread across multiple levels of nesting, with cross-references between elements that must be resolved during parsing.
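To make the parsing task concrete, here is a minimal sketch using Python's standard xml.etree.ElementTree. The XML fragment is a simplified stand-in: the element and attribute names (inputTable, matchingMode, innerJoin, entry, and so on) are assumptions chosen for illustration, not the exact schema Talend writes to .item files, and the summarize helper is hypothetical.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical tMap fragment; real .item files are nested more deeply.
TMAP_XML = """
<mapperData>
  <inputTable name="row1"/>
  <inputTable name="cust" lookup="true" matchingMode="FIRST_MATCH" innerJoin="false">
    <entry name="id" expression="row1.customer_id"/>
  </inputTable>
  <varTable name="Var">
    <entry name="full_name" expression="cust.first_name + &quot; &quot; + cust.last_name"/>
  </varTable>
  <outputTable name="out_ok" filter="row1.amount &gt; 0">
    <entry name="customer" expression="Var.full_name"/>
  </outputTable>
  <outputTable name="out_reject" reject="true">
    <entry name="customer_id" expression="row1.customer_id"/>
  </outputTable>
</mapperData>
"""


def entries(table):
    """Return {column name: expression} for one table element."""
    return {e.get("name"): e.get("expression") for e in table.findall("entry")}


def summarize(xml_text):
    """Collect main flow, lookups, variables, and outputs into one dict."""
    root = ET.fromstring(xml_text.strip())
    inputs = root.findall("inputTable")
    return {
        "main": [t.get("name") for t in inputs if t.get("lookup") != "true"],
        "lookups": [
            {
                "name": t.get("name"),
                "matching_mode": t.get("matchingMode"),
                "join_type": "inner" if t.get("innerJoin") == "true" else "left_outer",
                "join_keys": entries(t),
            }
            for t in inputs
            if t.get("lookup") == "true"
        ],
        "variables": {
            name: expr
            for var_table in root.findall("varTable")
            for name, expr in entries(var_table).items()
        },
        "outputs": {
            t.get("name"): {
                "filter": t.get("filter"),
                "reject": t.get("reject") == "true",
                "columns": entries(t),
            }
            for t in root.findall("outputTable")
        },
    }


summary = summarize(TMAP_XML)
```

Even in this toy form, the output column refers to a variable (Var.full_name) whose own expression refers to a lookup table, which is the kind of cross-reference a converter has to resolve before it can emit code.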

This proprietary structure is why generic XML-to-code translators fail on tMap conversion. A purpose-built parser that understands the semantics of each element type and the relationships between them is essential for producing correct output code.

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

The Conversion Challenge: From Visual Canvas to Code

Converting a tMap to PySpark or SQL requires solving several problems simultaneously:

- Expression language translation: Talend expressions use a Java-like syntax with Talend-specific functions (StringHandling.CHANGE, TalendDate.parseDate, Numeric.sequence). Each function must be mapped to its PySpark or SQL equivalent.

- Join reconstruction: The join type, join keys, and match model must be translated into DataFrame.join() calls or SQL JOIN clauses. The tMap’s ALL_MATCHES model is a standard join; FIRST_MATCH requires deduplication of the lookup before joining.

- Multi-output decomposition: A single tMap with three outputs must become three separate code paths. Each path applies its own filter and selects its own column set, but they all operate on the same joined intermediate result.

- Reject flow handling: Reject outputs capture rows that failed to match a lookup. In PySpark, this is typically implemented with a left anti-join or by tagging rows with a match indicator and filtering afterward.
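The expression-translation step can be sketched as a lookup table from Talend routines to Spark SQL functions. This is an illustrative toy, not MigryX's parser: it borrows Python's ast module because simple call-style Talend expressions happen to parse as Python, so it does not handle Java-only syntax such as the ternary operator. The ROUTINES table and the translate function are hypothetical names, and the mapping covers only four routines.

```python
import ast

# Hypothetical mapping: Talend routine -> (Spark SQL function, argument order).
# The order list says which Talend argument goes in each SQL position.
ROUTINES = {
    "StringHandling.UPCASE": ("upper", [0]),
    "StringHandling.TRIM": ("trim", [0]),
    "StringHandling.CHANGE": ("regexp_replace", [0, 1, 2]),
    # Talend takes (pattern, value); Spark's to_date takes (value, pattern).
    "TalendDate.parseDate": ("to_date", [1, 0]),
}


def translate(expression: str) -> str:
    """Translate a simple call-style Talend expression to a Spark SQL string."""
    return _emit(ast.parse(expression, mode="eval").body)


def _emit(node) -> str:
    if isinstance(node, ast.Call):
        func = node.func
        if not (isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name)):
            raise ValueError("unsupported call shape")
        name = f"{func.value.id}.{func.attr}"
        if name not in ROUTINES:
            raise ValueError(f"no mapping for {name}")
        sql_name, order = ROUTINES[name]
        args = [_emit(arg) for arg in node.args]
        return f"{sql_name}({', '.join(args[i] for i in order)})"
    if isinstance(node, ast.Attribute) and isinstance(node.value, ast.Name):
        # Column reference such as row1.order_date
        return f"{node.value.id}.{node.attr}"
    if isinstance(node, ast.Constant):
        if isinstance(node.value, str):
            return "'" + node.value.replace("'", "''") + "'"
        return repr(node.value)
    raise ValueError(f"unsupported syntax: {type(node).__name__}")


converted = translate(
    'TalendDate.parseDate("yyyy-MM-dd", StringHandling.TRIM(row1.order_date))'
)
```

The argument reordering for TalendDate.parseDate shows why a plain rename is not enough: function names, argument order, and pattern dialects can all differ, and date patterns in particular should be checked case by case.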

The Scale of the Challenge

Even a simple tMap with one lookup join, a filter, and a few calculated output columns involves coordinating join semantics, expression translation, column aliasing, and type handling. But production tMaps routinely have 4–6 lookup tables, 20+ output columns with nested expressions, expression variables, and multiple output tables with different filters. Automated parsing is the only practical approach at scale.

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Patterns That Make tMap Conversion Extremely Difficult

The simple cases are manageable. The edge cases are where migrations fail. Here are the patterns that require special attention:

Lookup Joins with Filters and Calculated Columns

The most common tMap pattern combines a main flow with one or more lookup joins, applies row-level filters, and computes new columns using Talend’s expression language. Converting this correctly requires simultaneously handling join semantics, expression translation, column resolution across multiple input flows, and filter placement. Getting any one of these wrong produces silently incorrect data. MigryX handles lookup join conversion automatically, generating optimized PySpark that preserves the exact filtering, joining, and column computation semantics.
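As a rough illustration of this pattern in SQL, the sketch below uses Python's built-in sqlite3 module so it runs without a cluster. The table names, columns, and the discount rule are invented for the example. It combines a left outer lookup join, a filter on the main flow, and one calculated column.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
CREATE TABLE customers (customer_id INTEGER, name TEXT, discount REAL);
INSERT INTO orders VALUES (1, 10, 100.0), (2, 11, 50.0), (3, 99, 80.0), (4, 10, -5.0);
INSERT INTO customers VALUES (10, 'Acme', 0.25), (11, 'Globex', 0.0);
""")

rows = conn.execute("""
    SELECT o.order_id,
           c.name AS customer_name,
           -- Calculated column; COALESCE covers orders with no lookup match.
           o.amount * (1 - COALESCE(c.discount, 0)) AS net_amount
    FROM orders AS o
    LEFT JOIN customers AS c ON c.customer_id = o.customer_id
    -- Filter on a main-flow column, so unmatched orders are still kept.
    WHERE o.amount > 0
    ORDER BY o.order_id
""").fetchall()
```

Filter placement matters here. A WHERE condition on a lookup column (for example c.discount > 0) would silently drop unmatched rows and turn the left outer join into an inner join, which is one way a conversion can produce incorrect data without raising an error.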

Reject Flows

Reject flows are particularly tricky—they represent records that failed to match a join condition, requiring anti-join patterns that maintain the original tMap’s filtering semantics. When a tMap lookup is configured to reject unmatched rows, the reject output captures the complement of the join. This seems simple in isolation, but when combined with multiple lookups (each with their own reject handling), expression variables that must be computed differently for matched vs. rejected rows, and downstream components that depend on the reject output, the conversion becomes a multi-dimensional puzzle. MigryX handles reject flow conversion automatically, ensuring that rejected rows match the original Talend behavior exactly.
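The complement relationship between the matched output and the reject output can be sketched with an anti-join. The example uses sqlite3 and invented tables; in PySpark the same idea is usually written as a join with how="left_anti". How a null join key should be treated is an assumption to verify against the original job: in SQL a NULL key never matches, so it lands in the reject set here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER, customer_id INTEGER);
CREATE TABLE customers (customer_id INTEGER, name TEXT);
INSERT INTO orders VALUES (1, 10), (2, 11), (3, 99), (4, NULL);
INSERT INTO customers VALUES (10, 'Acme'), (11, 'Globex');
""")

# Main output: rows that found a lookup match (inner join).
matched = conn.execute("""
    SELECT o.order_id, c.name
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
""").fetchall()

# Reject output: rows with no lookup match (anti-join via LEFT JOIN ... IS NULL).
rejected = conn.execute("""
    SELECT o.order_id, o.customer_id
    FROM orders AS o
    LEFT JOIN customers AS c ON c.customer_id = o.customer_id
    WHERE c.customer_id IS NULL
    ORDER BY o.order_id
""").fetchall()
```

A useful sanity check when the lookup key is unique, as it is here, is that every input row appears in exactly one of the two result sets.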

Multiple Output Tables

A single tMap can route rows to multiple output tables, each with different filter conditions and column selections. A row can appear in zero, one, or multiple outputs depending on the filter logic. Converting this correctly means the joined intermediate result must be computed once and then each output’s filters and column mappings must be applied independently—without introducing subtle ordering dependencies or redundant computation. The complexity multiplies when different outputs have different expression variables or when outputs feed into components with different schema requirements. MigryX handles multi-output tMap conversion automatically, generating optimized PySpark that preserves each output’s distinct filtering and transformation logic.
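One way to picture the multi-output decomposition is shown below, again with sqlite3 and made-up tables. The joined result is materialized once, and each output is a filter plus a column selection over it. The OUTPUTS dictionary and its two output names are hypothetical; in PySpark the analogous shape would be one joined DataFrame, possibly cached, followed by a filter and select per output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
CREATE TABLE customers (customer_id INTEGER, name TEXT, country TEXT);
INSERT INTO orders VALUES (1, 10, 150.0), (2, 11, 20.0), (3, 12, 500.0), (4, 12, 5.0);
INSERT INTO customers VALUES (10, 'Acme', 'US'), (11, 'Globex', 'US'), (12, 'Initech', 'DE');

-- Shared intermediate result, computed once.
CREATE TEMP TABLE joined AS
SELECT o.order_id, o.amount, c.name AS customer_name, c.country
FROM orders AS o
LEFT JOIN customers AS c ON c.customer_id = o.customer_id;
""")

# Each output: (column list, filter condition), applied independently.
OUTPUTS = {
    "high_value": ("order_id, amount", "amount >= 100"),
    "domestic": ("order_id, customer_name", "country = 'US'"),
}

results = {
    name: conn.execute(
        f"SELECT {columns} FROM joined WHERE {condition} ORDER BY order_id"
    ).fetchall()
    for name, (columns, condition) in OUTPUTS.items()
}
```

In this data, order 1 lands in both outputs, orders 2 and 3 land in one each, and order 4 lands in neither, which covers the zero, one, and multiple cases described above.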

Expression Variables as Intermediate Calculations

Talend expression variables are computed before output columns and can reference each other in a defined order. They act as intermediate calculations that are reused across multiple output columns or even multiple output tables. Translating these correctly requires preserving the computation order, handling type coercions that Talend performs implicitly, and ensuring that downstream references resolve to the correct intermediate value. This is especially challenging when expression variables use Talend-specific functions that have no direct equivalent in PySpark. MigryX handles expression variable conversion automatically, generating code that preserves the computation order and semantics of the original tMap.
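The ordering requirement can be sketched in plain Python. The variable names and formulas below are invented; the point is only that variables are evaluated top to bottom, each one may read those defined before it, and output columns read the finished set. In PySpark this typically becomes a chain of withColumn calls in the same order.

```python
# Ordered (name, function) pairs. Order matters: later variables read earlier ones.
# Each function receives the input row and the variables computed so far.
VARIABLES = [
    ("net", lambda row, var: row["amount"] - row["discount"]),
    ("tax", lambda row, var: var["net"] * 0.25),
    ("gross", lambda row, var: var["net"] + var["tax"]),
]

# Output columns may reuse any variable, across one or several outputs.
OUTPUT_COLUMNS = {
    "total": lambda row, var: var["gross"],
    "tax_paid": lambda row, var: var["tax"],
}


def map_row(row, variables=VARIABLES):
    """Evaluate variables in declaration order, then the output columns."""
    var = {}
    for name, fn in variables:
        var[name] = fn(row, var)
    return {col: fn(row, var) for col, fn in OUTPUT_COLUMNS.items()}


result = map_row({"amount": 120.0, "discount": 20.0})
```

Evaluating the same list in reverse order fails with a KeyError because gross would be computed before net and tax exist, which is the kind of dependency a converter has to preserve when it reorders or inlines expressions.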

Join Type Variations

The tMap supports inner join and left outer join for each lookup, along with different match models (unique match, first match, all matches). Each combination produces different behavior for duplicate keys, null keys, and unmatched rows. The “first match” model is particularly challenging because it requires deduplication of the lookup input before joining, a step that has no direct visual representation in the tMap designer but must be correctly generated in the output code. MigryX handles all join type and match model combinations, generating the appropriate deduplication and join logic for each.
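The difference between the all-matches and first-match models can be sketched as follows, using sqlite3 and invented data. One assumption is worth calling out: "first" only has meaning if the lookup's arrival order is recorded somewhere, so the example adds a hypothetical seq column for that purpose. In PySpark the same deduplication is commonly written with row_number() over a window partitioned by the key and ordered by such a column.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER, customer_id INTEGER);
-- seq is a hypothetical column recording the lookup's arrival order.
CREATE TABLE customers (seq INTEGER, customer_id INTEGER, name TEXT);
INSERT INTO orders VALUES (1, 10), (2, 11);
INSERT INTO customers VALUES
    (1, 10, 'Acme (old)'), (2, 10, 'Acme (new)'), (3, 11, 'Globex');
""")

# All matches: a standard join, so duplicate lookup keys multiply rows.
all_matches = conn.execute("""
    SELECT o.order_id, c.name
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id, c.seq
""").fetchall()

# First match: reduce the lookup to one row per key before joining.
first_match = conn.execute("""
    WITH first_rows AS (
        SELECT customer_id, MIN(seq) AS seq
        FROM customers
        GROUP BY customer_id
    )
    SELECT o.order_id, c.name
    FROM orders AS o
    JOIN first_rows AS f ON f.customer_id = o.customer_id
    JOIN customers AS c ON c.customer_id = f.customer_id AND c.seq = f.seq
    ORDER BY o.order_id
""").fetchall()
```

Swapping MIN for MAX would keep the last row per key instead. That variant may be the closer fit for the unique match model when the lookup contains duplicates, but this should be confirmed against the behavior of the Talend version in use.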

Preserving Multi-Output Logic in PySpark

One of the trickiest aspects of tMap conversion is preserving the semantics of multi-output tMaps. In Talend, a single tMap processes each input row once and routes it to the appropriate output(s) based on filter conditions. A row can appear in zero, one, or multiple outputs.

For tMaps with multiple output tables, MigryX generates optimized code that avoids redundant computation while preserving each output’s distinct filtering and transformation logic. Each output is derived from the same intermediate result, with its own filter and column selection applied independently.

For jobs where outputs feed into different downstream components (one to a database, another to a file, a third to an API), MigryX generates separate write operations for each output path, maintaining the original job’s data routing topology.

Testing Converted tMap Logic

The tMap is where most data transformation bugs hide, so testing must be thorough. MigryX recommends a three-tier testing approach for converted tMap logic:

Unit Tests: Expression-Level

Each converted expression (column computation, filter condition, join key) should be tested individually. Create small input DataFrames with known values and verify that the output matches the expected result. Pay special attention to null handling—Talend and PySpark handle nulls differently in string concatenation, arithmetic, and comparisons.
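The null-handling difference in string concatenation can be pinned down with a small table of cases. The two helpers below are hypothetical models, not library functions: java_concat mimics Java's + operator on strings, where a null operand becomes the text "null", and sql_concat mimics SQL-style concat(), where any NULL operand makes the result NULL.

```python
def java_concat(*parts):
    """Model of Java string +: a null operand is rendered as the text 'null'."""
    return "".join("null" if p is None else str(p) for p in parts)


def sql_concat(*parts):
    """Model of SQL-style concat(): any NULL operand makes the result NULL."""
    if any(p is None for p in parts):
        return None
    return "".join(str(p) for p in parts)


# Known inputs for an expression like: row1.first_name + " " + row1.last_name
CASES = [
    ("Ada", "Lovelace"),
    ("Ada", None),
    (None, None),
]

comparison = [
    (first, last, java_concat(first, " ", last), sql_concat(first, " ", last))
    for first, last in CASES
]
```

Only the first case agrees between the two models. A converted expression therefore needs an explicit decision, for example wrapping operands in coalesce, and a unit test per case to lock that decision in.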

Integration Tests: Full tMap Equivalence

Run the original Talend job and the converted PySpark code against the same input dataset. Compare all output tables row by row. For tMaps with reject flows, verify that the same rows appear in the reject output for both the original and converted versions.
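A row-by-row comparison can be sketched as a multiset difference, which tolerates different row ordering but still catches missing, extra, and duplicated rows. The diff_outputs helper is a hypothetical name and assumes both outputs have been collected into lists of tuples with the same column order; for large PySpark DataFrames, DataFrame.exceptAll applied in both directions serves a similar purpose.

```python
from collections import Counter


def diff_outputs(expected_rows, actual_rows):
    """Compare two outputs as multisets of rows, ignoring row order."""
    expected = Counter(map(tuple, expected_rows))
    actual = Counter(map(tuple, actual_rows))
    return {
        # Rows the original job produced that the converted code did not.
        "missing": sorted((expected - actual).elements(), key=repr),
        # Rows the converted code produced that the original job did not.
        "unexpected": sorted((actual - expected).elements(), key=repr),
    }


talend_reject = [(3, 99), (4, None)]
pyspark_reject = [(4, None), (3, 99), (3, 99)]  # same rows, one duplicated

report = diff_outputs(talend_reject, pyspark_reject)
```

Here the report flags one unexpected (3, 99) row. A comparison based on sets rather than multisets would miss that duplicate, which is exactly the kind of defect a wrong match model tends to introduce.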

Edge Case Tests

Test with empty inputs, null-heavy data, duplicate join keys, and very large datasets. These scenarios often expose differences between Talend’s Java-based processing and PySpark’s distributed execution. For example, Talend processes rows sequentially and maintains a Numeric.sequence() counter across the entire flow. In PySpark, monotonically_increasing_id() produces IDs that are unique and increasing but not consecutive, so reproducing a gap-free sequence requires a different approach, such as row_number() over an explicitly ordered window.
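The gap between the two ID schemes can be illustrated without a cluster. The sketch below is an emulation in plain Python, not PySpark itself. It follows the layout the Spark documentation describes for the current implementation of monotonically_increasing_id(), with the partition ID in the upper 31 bits and the per-partition record number in the lower 33 bits. Both function names are hypothetical.

```python
def emulate_monotonic_ids(partitions):
    """Emulate the documented ID layout: (partition index << 33) + row index."""
    return [
        (partition_index << 33) + row_index
        for partition_index, rows in enumerate(partitions)
        for row_index, _ in enumerate(rows)
    ]


def emulate_sequence(partitions, start=1, step=1):
    """Emulate a single counter running across the whole flow, in order."""
    total = sum(len(rows) for rows in partitions)
    return [start + i * step for i in range(total)]


# Three rows spread over two partitions.
partitions = [["a", "b"], ["c"]]

spark_style_ids = emulate_monotonic_ids(partitions)
talend_style_ids = emulate_sequence(partitions)
```

The first partition looks sequential, but the first row of the second partition jumps to 1 << 33. Any downstream logic that assumes consecutive values, such as surrogate keys compared against the Talend output, will diverge as soon as the data spans more than one partition.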

The tMap is the single most important component to get right in a Talend migration. If your tMap conversions are accurate, the rest of the job is straightforward. If they are wrong, everything downstream is wrong.

Automating tMap conversion is not a nice-to-have—it is a necessity for any Talend migration at scale. An enterprise with 500 Talend jobs might contain 1,500 or more tMap components, each with unique join logic, expression formulas, and output routing. Manual conversion at that scale would take years and introduce countless errors. Automated parsing, intelligent expression translation, and rigorous testing are the only path to a successful migration.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration — turning what used to be a multi-year manual effort into a streamlined, validated process. See it in action.
